Previous Blog on API Gateways (and APIs).

A service mesh is a follow-on pattern built around API Gateway services. The focus of a service mesh is service connectivity between two or more services. Every time a service wants to make a network request to another service (for example, a monolith consuming the database or a microservice consuming another microservice), we want to take care of that network request by making it more secure and observable, among other concerns.

Service mesh as a pattern can be applied on any architecture (e.g., monolithic or microservice-oriented) and on any platform (e.g., VMs, containers, Kubernetes). The service mesh does not implement new patterns over an API Gateway, but extends them.

Service policies around network and data control cover security, observability, and error handling within an application, as well as improving the connectivity of outbound and inbound network requests. With only an API Gateway in place, application teams usually implement these use cases by writing more code into their own services. Across different teams and programming languages, that means a lot of duplicated development effort with little reuse, and it creates security risks for the organization in how network connectivity is managed.

The service mesh pattern allows us to ‘outsource’ the network management of inbound and outbound requests made by any service, including third-party applications. This is achieved by using an out-of-process proxy, which manages every inbound and outbound network request.

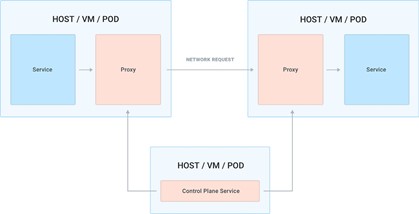

This proxy sits on the execution path of every request, so it is a data plane process. Because the use cases include end-to-end TLS encryption and observability, you run one instance of the proxy alongside every service. That way those features can be implemented seamlessly and abstracted away from the application teams, who do not have to do much work to get them.

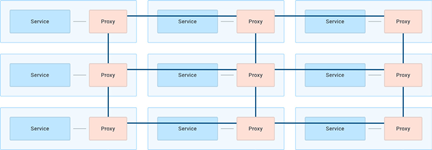

This data plane proxy runs alongside every replica of every service, which gives a decentralized deployment (as opposed to the API gateway pattern, which is a centralized deployment). To keep latency to a minimum, we can run the data plane proxy on the same machine (VM, host, pod) as the service itself. The proxy acts as a reverse proxy for incoming requests and as a client-side load balancer for outgoing ones.

To implement this decentralized proxy service, we need a control plane to enforce policies and propagate the correct configuration to every data plane proxy.
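To make the split between the two planes concrete, here is a minimal in-memory sketch of a control plane pushing one policy to every registered proxy. The class names (`Policy`, `DataPlaneProxy`, `ControlPlane`) and the policy fields are assumptions made for illustration; real meshes distribute configuration over the network and their APIs look different.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Policy:
    """Hypothetical mesh-wide policy; the field names are illustrative only."""
    mtls_required: bool = True
    request_timeout_s: float = 2.0
    version: int = 0


@dataclass
class DataPlaneProxy:
    """Stands in for one proxy instance running next to a service replica."""
    service: str
    policy: Policy = field(default_factory=Policy)

    def apply(self, policy: Policy) -> None:
        # Ignore updates that are older than the configuration already applied.
        if policy.version >= self.policy.version:
            self.policy = policy


class ControlPlane:
    """Holds the desired policy and propagates it to every known proxy."""

    def __init__(self) -> None:
        self._proxies = []
        self._policy = Policy()

    def register(self, proxy: DataPlaneProxy) -> None:
        # A newly started proxy receives the current policy immediately.
        self._proxies.append(proxy)
        proxy.apply(self._policy)

    def set_policy(self, *, mtls_required: bool, request_timeout_s: float) -> None:
        # Bump the version, then push the new policy to all proxies.
        self._policy = Policy(mtls_required, request_timeout_s,
                              self._policy.version + 1)
        for proxy in self._proxies:
            proxy.apply(self._policy)


control_plane = ControlPlane()
orders = DataPlaneProxy("orders")
billing = DataPlaneProxy("billing")
control_plane.register(orders)
control_plane.register(billing)
control_plane.set_policy(mtls_required=True, request_timeout_s=0.5)
```

The point of the sketch is that the control plane never touches request traffic: it only decides what the proxies should do, and the proxies enforce it on the execution path.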

The service mesh pattern, therefore, is more invasive than the API gateway pattern because it requires us to deploy a data plane proxy next to each instance of every service, requiring us to update our CI/CD jobs in a substantial way when deploying our applications.

With service mesh, we are fundamentally dealing with one primary use case.

Use Case: Service Connectivity

By outsourcing the network management to a third-party proxy application, the teams can avoid implementing network management in their own services. The proxy can then implement features like mutual TLS encryption, identity, routing, logging, tracing, load-balancing and so on for every service and workload that we deploy, including third-party services like databases that our organization is adopting but not building from scratch.
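As a rough idea of what the proxy takes off the application's hands, the sketch below builds the kind of TLS context a proxy could use on its inbound side to require a client certificate, which is the essence of mutual TLS. It uses only Python's standard `ssl` module. The function name and its parameters are hypothetical, and in a real mesh the certificates would typically be issued and rotated by the mesh rather than read from fixed paths.

```python
import ssl


def build_inbound_mtls_context(cert_file=None, key_file=None, ca_file=None):
    """Return a server-side TLS context that rejects clients without a valid cert.

    All three paths are placeholders supplied by the caller (an assumption of
    this sketch). If ca_file is None, the system's default CA store is used.
    """
    # Purpose.CLIENT_AUTH produces a context for the server side of a connection.
    context = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH, cafile=ca_file)
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    # The "mutual" part: the peer must present a certificate that verifies.
    context.verify_mode = ssl.CERT_REQUIRED
    if cert_file is not None:
        # The proxy's own identity, presented to the calling side.
        context.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return context


inbound_context = build_inbound_mtls_context()
```

Writing and maintaining this in every service, in every language, is exactly the duplicated effort that the data plane proxy removes.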

Since service connectivity within the organization will run on a large number of protocols, a complete service mesh implementation will ideally support not just HTTP but also any other TCP traffic, regardless of whether connections travel north-south or east-west. In this context, service mesh supports a broader range of services and implements L4/L7 traffic policies, whereas API gateways have historically been more focused on L7 policies only.

From a conceptual standpoint, service mesh has a very simple view of the workloads that are running in our systems: everything is a service, and services can talk to each other. Because an API gateway is also a service that receives requests and makes requests, an API gateway would just be a service among other services in a mesh.

The data plane proxies are effectively client-side load balancers that route outgoing requests to other proxies (and therefore to other services). For this L4/L7 routing to work, the control plane of a service mesh must know the address of each proxy. Each address can be associated with metadata, such as the service name. By doing so, a service mesh essentially provides built-in service discovery that doesn’t necessarily require a third-party solution. A service discovery tool can still be used to communicate outside of the mesh, but most likely not for the traffic that stays inside the mesh.
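The relationship between proxy addresses, service names, and client-side load balancing can be sketched in a few lines. This is a simplified in-memory model, not how any particular mesh implements it: `ServiceRegistry` and `ClientSideBalancer` are hypothetical names, the addresses are made up, and round-robin is just one possible selection strategy.

```python
import itertools
from collections import defaultdict


class ServiceRegistry:
    """Hypothetical control-plane view: service name -> known proxy addresses."""

    def __init__(self):
        self._addresses = defaultdict(list)

    def register(self, service, address):
        # The service name is the metadata attached to each proxy address.
        if address not in self._addresses[service]:
            self._addresses[service].append(address)

    def lookup(self, service):
        return list(self._addresses.get(service, []))


class ClientSideBalancer:
    """Round-robin choice of a destination, as an outgoing proxy might make."""

    def __init__(self, registry):
        self._registry = registry
        # One independent counter per destination service.
        self._counters = defaultdict(itertools.count)

    def pick(self, service):
        addresses = self._registry.lookup(service)
        if not addresses:
            raise LookupError(f"no known proxy for service {service!r}")
        index = next(self._counters[service]) % len(addresses)
        return addresses[index]


registry = ServiceRegistry()
registry.register("billing", "10.0.0.1:15001")  # example addresses only
registry.register("billing", "10.0.0.2:15001")

balancer = ClientSideBalancer(registry)
picks = [balancer.pick("billing") for _ in range(3)]
```

Because the calling service only ever names the destination ("billing"), the lookup and the choice of replica both happen in the proxy, which is why a separate service discovery tool is not strictly required for traffic inside the mesh.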